TL;DR:

- Modern platforms use Auto Scaling Groups with target tracking and step scaling to adjust capacity reliably.

- Horizontal scaling and container orchestration on AWS, GCP, and Azure handle traffic spikes and mixed workloads efficiently.

- Success depends on matching workload types to platform strengths, proper resource sizing, and ongoing policy refinement.

Picking a hosting platform that scales reliably under real production pressure is one of the hardest infrastructure decisions an IT team makes. Traffic patterns shift without warning, business requirements evolve quarterly, and the wrong choice locks you into a cost structure that punishes growth instead of enabling it. Auto Scaling Groups with target tracking and step scaling give modern platforms the ability to adjust capacity dynamically, but knowing which platform fits your workload takes more than reading a feature list. This article walks through concrete examples, measurable results, and a practical decision framework so your team can choose with confidence.

Table of Contents

- How to evaluate scalable hosting solutions

- AWS EC2 Auto Scaling and Lambda: Flexibility for any workload

- Azure AKS and GCP GKE: Containerized scalability in action

- Case studies: E-commerce at scale and hybrid/multi-cloud strategy

- A fresh perspective: Practical wisdom on choosing your scalable hosting partner

- Explore reliable, scalable solutions for your enterprise

- Frequently asked questions

Key Takeaways

| Point | Details |
|---|---|
| Evaluate with clear criteria | Assess each hosting solution's scalability, reliability, and cost efficiency before making decisions. |
| AWS, Azure, GCP excel in different ways | Choose the provider whose strengths match your workload, traffic patterns, and integration needs. |
| Real-world data beats speculation | Use actual benchmarks and case studies to guide selection, not just feature lists. |
| Right-size to save costs | Adjust your baseline capacity before auto scaling to prevent unnecessary spending. |
| Iterate for success | Continuously monitor, adapt, and evolve your hosting for sustainable reliability and performance. |

How to evaluate scalable hosting solutions

With the scope of the challenge set, let's begin with the essential criteria for evaluating any scalable hosting platform. Not every provider means the same thing when they say "scalable," so defining your terms before you compare options saves real time and budget.

Start with the scalability model. Vertical scaling adds CPU, RAM, or storage to an existing server. Horizontal scaling adds more servers and distributes workloads across them. Most modern enterprise workloads benefit from horizontal scaling because it removes single points of failure and handles traffic spikes more gracefully. Understanding how cloud infrastructure works helps clarify which model fits your architecture before you commit to a provider.

Next, examine elasticity, which is the platform's ability to scale both up and down automatically based on real-time metrics. A platform that scales up fast but scales down slowly will drain your budget during quiet periods. For most workloads, target tracking scaling is the simplest and most effective approach: it holds a metric at a target such as 60% CPU utilization. Step scaling gives you finer control when traffic is irregular. Use a short scale-out cooldown of around 60 seconds and a longer scale-in cooldown of around 300 seconds to avoid flapping, where capacity is added and removed in rapid succession.
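
As a rough illustration of how a metric target and two cooldowns interact, the Python sketch below models a toy controller. The proportional sizing rule, the `TargetTracker` class, and the size bounds are assumptions made for illustration and are not any provider's actual algorithm. Only the 60% target and the 60 and 300 second cooldowns come from the paragraph above.

```python
import math
from dataclasses import dataclass


@dataclass
class TargetTracker:
    """Toy target-tracking controller with separate scale-out and scale-in cooldowns."""

    target_cpu: float = 60.0        # metric target from the text (percent)
    scale_out_cooldown: int = 60    # seconds, from the text
    scale_in_cooldown: int = 300    # seconds, from the text
    min_size: int = 1               # assumed fleet bounds
    max_size: int = 20
    last_change: float = float("-inf")  # time of the most recent capacity change

    def decide(self, now: float, capacity: int, avg_cpu: float) -> int:
        """Return the capacity to run after observing avg_cpu at time `now` (seconds)."""
        # Assumed proportional rule: size the fleet so average CPU lands near the target.
        desired = math.ceil(capacity * avg_cpu / self.target_cpu)
        desired = max(self.min_size, min(self.max_size, desired))
        if desired == capacity:
            return capacity
        cooldown = self.scale_out_cooldown if desired > capacity else self.scale_in_cooldown
        if now - self.last_change < cooldown:
            return capacity  # still cooling down, so hold steady
        self.last_change = now
        return desired
```

With four instances at 90% CPU, this toy controller asks for six. It then ignores a scale-in signal until 300 seconds have passed since the last change, which is the asymmetry that dampens flapping.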

Here are the core criteria every IT team should evaluate:

- Scalability model: Does the platform support horizontal, vertical, or both?

- Cost efficiency: Is pricing pay-per-use, reserved, or flat rate? How does it behave at scale?

- Reliability: What SLA uptime does the provider guarantee, and what does their track record show?

- Automation: Can scaling policies trigger without manual intervention?

- Integration: Does the platform connect cleanly with your existing CI/CD, monitoring, and security stack?

- Security: Is the provider PCI DSS certified? Do they offer built-in DDoS protection and encrypted storage?
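
One way to turn the criteria above into a comparable number is a weighted scoring matrix. The sketch below is a minimal example; the weights, the 1 to 5 scoring scale, and the function names are hypothetical placeholders that your team would replace with its own priorities.

```python
# Hypothetical weights for the six criteria; adjust to your own priorities.
CRITERIA_WEIGHTS = {
    "scalability": 0.25,
    "cost": 0.20,
    "reliability": 0.20,
    "automation": 0.15,
    "integration": 0.10,
    "security": 0.10,
}


def weighted_score(scores: dict[str, float],
                   weights: dict[str, float] = CRITERIA_WEIGHTS) -> float:
    """Weighted average of per-criterion scores (for example, on a 1 to 5 scale)."""
    missing = set(weights) - set(scores)
    if missing:
        raise ValueError(f"missing scores for: {sorted(missing)}")
    total_weight = sum(weights.values())
    return sum(scores[name] * weight for name, weight in weights.items()) / total_weight


def rank(candidates: dict[str, dict[str, float]]) -> list[tuple[str, float]]:
    """Return (platform, score) pairs sorted from best to worst."""
    scored = [(name, round(weighted_score(s), 2)) for name, s in candidates.items()]
    return sorted(scored, key=lambda item: item[1], reverse=True)
```

The output is only as good as the scores fed in, so treat the ranking as a way to structure the discussion rather than as the decision itself.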

Reviewing the types of web hosting available alongside your evaluation criteria helps you match the right hosting model to your actual workload profile. Also consider whether the platform supports predictive scaling, which uses historical patterns to pre-provision capacity before demand peaks, rather than reacting after the fact. For a broader view of the major providers, comparing how AWS, GCP, and Azure scale reveals meaningful differences in how each handles real enterprise loads.
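
To make the idea of predictive scaling concrete, here is a deliberately simple sketch that averages each hour of the day across past days and sizes the fleet ahead of time. Real predictive scaling features use more sophisticated forecasting; the per-instance throughput, the 20% headroom, and the function names are assumptions for illustration.

```python
import math
from statistics import mean


def forecast_by_hour(history: list[list[float]]) -> list[float]:
    """Average each hour of the day across past days (each day holds 24 hourly request rates)."""
    if not history or any(len(day) != 24 for day in history):
        raise ValueError("history must be a non-empty list of 24-value days")
    return [mean(day[hour] for day in history) for hour in range(24)]


def capacity_plan(history: list[list[float]], per_instance_rps: float,
                  headroom: float = 1.2, min_size: int = 1) -> list[int]:
    """Instances to have ready at the start of each hour, with assumed headroom."""
    return [
        max(min_size, math.ceil(rate * headroom / per_instance_rps))
        for rate in forecast_by_hour(history)
    ]
```

A plan like this would feed scheduled capacity changes, with reactive policies still in place to catch whatever the forecast misses.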

Pro Tip: Right-size your resources before enabling auto scaling. Scaling an oversized baseline instance wastes money at every tier. Benchmark your actual resource consumption under realistic load first, then configure your scaling policies around that baseline.
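
A minimal sketch of that benchmarking step, assuming you have sampled CPU cores in use under realistic load: take the 95th percentile and pick the smallest size that keeps it under a utilization target. The size catalogue, the 95th percentile choice, and the 60% target are hypothetical and do not correspond to any provider's instance list.

```python
import math


def percentile(samples: list[float], pct: float) -> float:
    """Nearest-rank percentile; pct is in the range (0, 100]."""
    if not samples:
        raise ValueError("no samples")
    ordered = sorted(samples)
    rank = math.ceil(pct * len(ordered) / 100)
    return ordered[max(rank, 1) - 1]


# Hypothetical size catalogue: name -> vCPUs.
SIZES = {"small": 2, "medium": 4, "large": 8, "xlarge": 16}


def right_size(cpu_cores_used: list[float], target_utilization: float = 0.6) -> str:
    """Smallest size whose vCPU count keeps p95 usage at or below the target utilization."""
    needed = percentile(cpu_cores_used, 95) / target_utilization
    for name, vcpus in sorted(SIZES.items(), key=lambda kv: kv[1]):
        if vcpus >= needed:
            return name
    raise ValueError("load exceeds the largest size; scale horizontally instead")
```

Sizing from a high percentile rather than the peak keeps a single outlier sample from inflating the baseline that every scaled-out instance then inherits.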

AWS EC2 Auto Scaling and Lambda: Flexibility for any workload

Armed with these criteria, let's see how leading providers implement them, starting with AWS. Amazon Web Services remains the most widely deployed cloud platform for a reason: it offers multiple scaling mechanisms that fit different workload types without forcing you into a single model.

AWS EC2 Auto Scaling Groups support scheduled scaling for predictable patterns, step scaling for nuanced threshold-based responses, and target tracking for steady-state workloads. You define the metric, set the target, and the platform handles capacity. This flexibility is what makes AWS the default choice for teams managing mixed workloads across production, staging, and batch processing environments. Reviewing cloud solution examples from enterprise deployments shows how organizations combine these policies to cover every traffic scenario.

For bursty or unpredictable traffic, AWS Lambda changes the equation entirely. Lambda auto-scales to thousands of concurrent executions with pay-per-use pricing at $0.20 per million requests after the free tier. You pay only for what runs, which prevents the overprovisioning trap that inflates bills on quiet days. The tradeoff is cold-start latency. Node.js Lambda functions average 100 to 300 milliseconds per cold start, which matters for latency-sensitive APIs but is acceptable for background processing and event-driven workflows.
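
The request pricing above is easy to sanity-check with a few lines of arithmetic. This sketch covers the request charge only; compute duration is billed separately and is left out. The free allowance of one million requests per month is an assumption you should verify against current pricing for your account.

```python
PRICE_PER_MILLION_REQUESTS = 0.20     # USD, request charge quoted in the text
FREE_REQUESTS_PER_MONTH = 1_000_000   # assumption: free-tier allowance, verify before use


def monthly_request_cost(requests: int,
                         free_requests: int = FREE_REQUESTS_PER_MONTH) -> float:
    """Request charge in USD for one month, excluding duration-based compute charges."""
    billable = max(0, requests - free_requests)
    return round(billable / 1_000_000 * PRICE_PER_MILLION_REQUESTS, 2)
```

Under these assumptions, 51 million requests in a month cost $10.00 in request charges, and a month with 500,000 requests costs nothing.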

Key AWS scaling capabilities worth knowing:

- Target tracking: Maintains a chosen metric, such as CPU at 60%, and scales automatically around that target

- Step scaling: Triggers different capacity changes at different metric thresholds for fine-grained control

- Predictive scaling: Uses ML-based forecasting to pre-provision capacity before demand arrives

- Lambda concurrency: Scales from zero to thousands of executions with no infrastructure management

- Metric flexibility: Choose between CPU utilization, ALB request count, or custom CloudWatch metrics
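
Step scaling, as described in the list above, maps metric ranges to different capacity changes. The sketch below shows that mapping in isolation; the thresholds and adjustment sizes are hypothetical values chosen for illustration, not recommended settings.

```python
from bisect import bisect_right

# Hypothetical step policy: each step starts at a CPU percentage and adds N instances.
STEP_LOWER_BOUNDS = [70, 80, 90]
STEP_ADJUSTMENTS = [1, 2, 4]


def step_adjustment(cpu_percent: float) -> int:
    """Instances to add for the observed CPU; 0 when below the first threshold."""
    step = bisect_right(STEP_LOWER_BOUNDS, cpu_percent)
    return STEP_ADJUSTMENTS[step - 1] if step else 0
```

A mild breach at 72% CPU adds one instance here, while a severe breach at 95% adds four. That is the finer control step scaling offers over a single target.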

The auto scaling policies you configure directly affect both your cost and your reliability. Getting them wrong in either direction, too aggressive or too conservative, creates real problems. Teams that go through the hosting solution selection process systematically tend to configure better policies from the start.

Pro Tip: For Lambda-based APIs, enable provisioned concurrency on your most latency-sensitive functions. It keeps those functions initialized, so requests on those paths avoid cold starts, and you do not pay for always-on EC2 instances.

Azure AKS and GCP GKE: Containerized scalability in action

While AWS dominates in flexibility, container-first platforms are game-changers for many organizations. Azure Kubernetes Service (AKS) and Google Kubernetes Engine (GKE) bring managed container orchestration to enterprise scale, removing much of the operational overhead that made Kubernetes intimidating five years ago.

Containers simplify deployment and portability. Your application runs the same way in development, staging, and production, which reduces environment-related failures and speeds up release cycles. Both AKS and GKE handle the Kubernetes control plane for you, so your team focuses on workload configuration rather than cluster management.

Azure AKS enables auto-scaling for high-traffic applications. One e-commerce platform reported 99.99% uptime and 30 to 60% improvements in performance and cost after migrating. Results like that reflect what happens when you match a containerized workload to a platform built to run it efficiently. Azure also excels at hybrid integration, connecting on-premises Active Directory environments with cloud workloads through Azure Arc. For organizations that need global hosting, AKS multi-region deployments provide geographic redundancy with consistent policy management.

| Feature | Azure AKS | GCP GKE |
|---|---|---|
| Kubernetes origin | Managed service | Pioneered Kubernetes |
| Auto scaling | Cluster and pod level | Cluster and pod level |
| Hybrid integration | Strong via Azure Arc | Limited |
| Price-performance | Competitive | Best for analytics workloads |
| Serverless timeout | N/A | 60 minutes (Cloud Run) |
| Best fit | Hybrid enterprise, Windows workloads | Data-heavy, analytics, ML |
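
Pod-level auto scaling on both platforms is typically handled by the Kubernetes Horizontal Pod Autoscaler, which sizes a workload from the ratio of the observed metric to its target. The sketch below follows the formula in the Kubernetes documentation, including its default 10% tolerance. The function name and the replica bounds are assumptions for illustration.

```python
import math


def desired_replicas(current_replicas: int, current_metric: float, target_metric: float,
                     tolerance: float = 0.1, min_replicas: int = 1,
                     max_replicas: int = 50) -> int:
    """Replica count from the ratio of observed metric to target, clamped to bounds."""
    ratio = current_metric / target_metric
    if abs(ratio - 1.0) <= tolerance:
        return current_replicas  # close enough to target, so avoid churn
    desired = math.ceil(current_replicas * ratio)
    return max(min_replicas, min(max_replicas, desired))
```

Four pods averaging 90% CPU against a 60% target become six, while four pods at 63% stay at four because the ratio falls inside the tolerance band.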

Google created Kubernetes, and GKE continues to lead in price-performance for analytics and machine learning workloads. Google Cloud Run, Google's serverless container option, supports timeouts of up to 60 minutes and fast cold starts, making it a strong fit for long-running batch jobs that would time out on Lambda.

"The right container platform is not the one with the most features. It is the one whose operational model matches your team's existing skills and your workload's actual requirements."

For IT teams that need reliable hosting, AKS and GKE both deliver strong SLAs, but the integration story with your existing toolchain matters as much as raw performance numbers.

Case studies: E-commerce at scale and hybrid/multi-cloud strategy

Having seen how container-focused platforms perform, let's examine real enterprise results and best practices from migration projects. Numbers from real deployments cut through vendor claims and show what scalable infrastructure actually delivers.

One e-commerce migration to AWS using EKS and Auto Scaling handled five times the previous user volume, achieved 63% faster page load times, and pushed uptime from 92% to 99.99%. That uptime jump alone represents a dramatic reduction in lost revenue during peak shopping periods. The performance gain came from combining containerized workloads with properly configured auto scaling policies and a CDN layer in front of the application tier.
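
To see what that uptime jump means in hours, the short calculation below converts an uptime percentage into expected downtime per year. It assumes a 365-day year and that downtime is spread according to the stated percentage; the function name is illustrative.

```python
HOURS_PER_YEAR = 365 * 24  # 8760, ignoring leap years


def annual_downtime_hours(uptime_percent: float) -> float:
    """Expected hours of downtime per year for a given uptime percentage."""
    if not 0 <= uptime_percent <= 100:
        raise ValueError("uptime_percent must be between 0 and 100")
    return round((100 - uptime_percent) / 100 * HOURS_PER_YEAR, 2)
```

At 92% uptime this works out to roughly 700 hours of downtime a year, compared with under one hour at 99.99%.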

Multi-cloud strategies are growing in adoption, but they require honest planning. Using AWS for compute, GCP for analytics, and Azure for identity management gives you best-of-breed capabilities. The multi-cloud tradeoffs include higher integration complexity, more vendor relationships to manage, and networking costs between clouds that add up quickly. GCP often delivers 19 to 22% lower total cost of ownership for analytics workloads compared to other providers, which makes it worth including in a multi-cloud strategy for data-intensive operations.

Three practical lessons from successful enterprise migrations:

- Match workload type to platform strengths. Running analytics workloads on a platform optimized for transactional compute wastes money and underperforms. Audit your workload types first, then select platforms.

- Right-size before you scale. Teams that skip this step consistently overspend. Measure actual resource consumption under realistic load before configuring any scaling policy.

- Treat scaling configuration as a living document. Traffic patterns change. Review and adjust your scaling policies quarterly, not just at launch.

"The enterprises that get the most value from scalable infrastructure are not the ones who chose the best platform. They are the ones who built a review process that keeps their configuration aligned with reality."

Reviewing the enterprise hosting guide gives IT teams a structured framework for applying these lessons to their own migration planning.

A fresh perspective: Practical wisdom on choosing your scalable hosting partner

After reviewing tangible platform results, it is worth stepping back for a more strategic take on what really drives scalable infrastructure success. Comparison charts are a starting point, not a decision. Every major provider looks impressive on a feature matrix. What the matrix does not show is how the platform behaves at 2 a.m. when a traffic spike hits a misconfigured scaling policy, or how long it takes to get a knowledgeable support engineer on a call.

Long-term adaptability matters more than current feature parity. The platform you choose today needs to accommodate workloads you have not designed yet. Automation depth, API quality, and the provider's track record of backward compatibility tell you more about long-term fit than any benchmark score.

We have seen organizations run successful pilots on a secondary workload before committing their core infrastructure to a new platform. That approach surfaces integration gaps and operational friction before they become production problems. The hosting selection process should include a pilot phase, not just a proof of concept. A proof of concept tests whether something works. A pilot tests whether your team can operate it reliably under real conditions. That distinction changes outcomes.

Explore reliable, scalable solutions for your enterprise

Taking inspiration from proven approaches, here is how to bring scalable hosting to your own infrastructure. The examples above show what is possible when the right platform meets the right workload. Getting there starts with matching your specific requirements to a hosting solution built for reliability and growth.

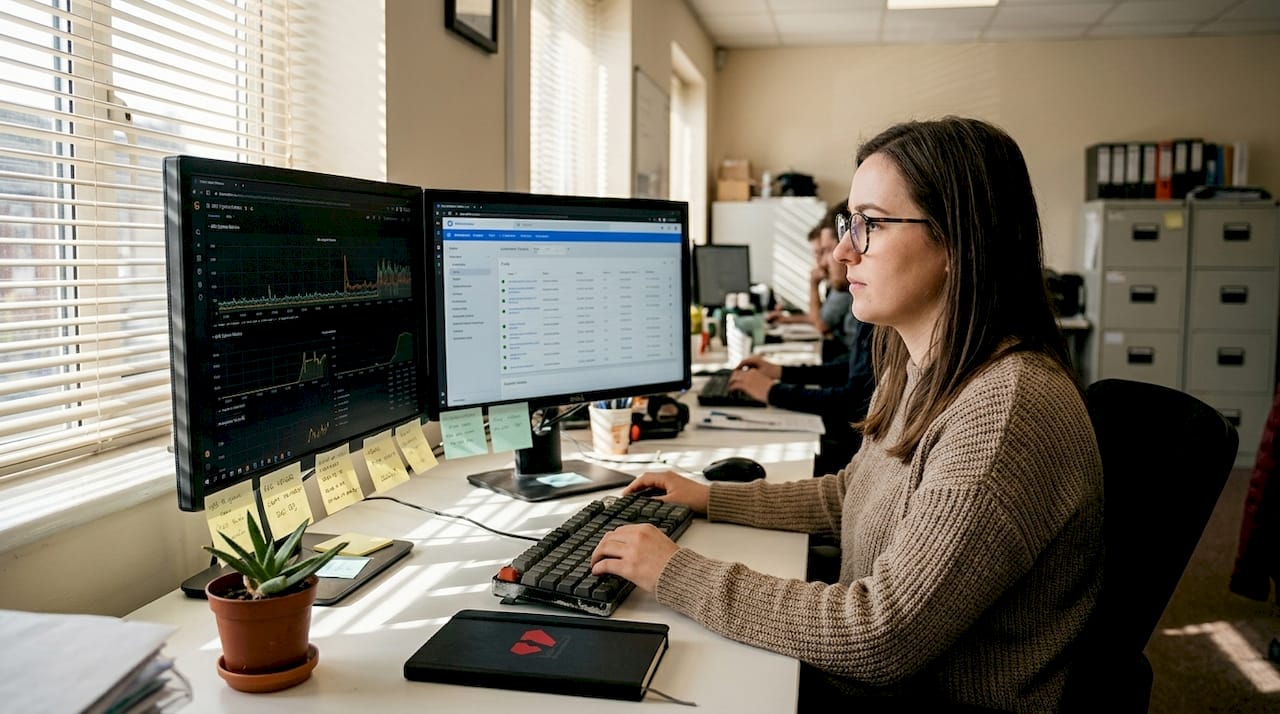

At Internetport, we have been building and managing scalable infrastructure for enterprises since 2008. Whether your team needs dedicated server solutions for consistent high-performance workloads, scalable VPS options with SSD storage and Plesk control panel, or web hosting plans backed by daily backups and free SSL, we have a configuration that fits. Our PCI DSS-certified data centers and redundant systems keep your infrastructure running when it matters most. Contact us to map the right solution to your business needs and get a consultation from our infrastructure team.

Frequently asked questions

What is the difference between vertical and horizontal scaling in hosting?

Vertical scaling adds resources to an existing server, while horizontal scaling adds more servers to distribute workloads across multiple nodes. Horizontal scaling is generally more resilient because it eliminates single points of failure.

Which hosting type works best for unpredictable traffic spikes?

Serverless platforms like Lambda auto-scale to thousands of concurrent executions and charge only for actual use, making them ideal for bursty or unpredictable traffic patterns. They eliminate idle capacity costs entirely.

How does auto scaling improve reliability?

Auto Scaling Groups with target tracking automatically adjust capacity to match real-time demand, preventing both overload and underutilization. This keeps performance consistent without requiring manual intervention during traffic surges.

Is multi-cloud worth the added complexity?

Multi-cloud reduces vendor lock-in and lets you use best-of-breed services, but it increases integration complexity and can raise networking costs between providers. Weigh these tradeoffs against your team's operational capacity to manage multiple platforms.

How can I avoid overspending when scaling up?

Right-size resources before scaling by measuring actual consumption under realistic load, then configure auto scaling policies around that baseline. Review usage quarterly to catch drift before it compounds into significant waste.